

Applications

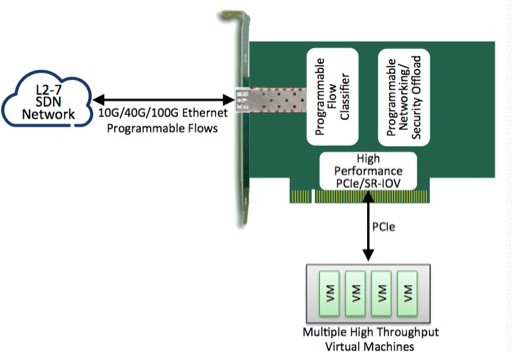

PCI-E NIC Cards provide redundant connectivity to ensure an uninterrupted network connection.

PCI-E NIC Cards are ideal for VM environments with multiple operating systems, requiring shared or dedicated NICs.

They are specially designed for desktop PC clients, servers, and workstations with few PCI Express slots available.


Performance of System Interconnect Using PCI Express Switches

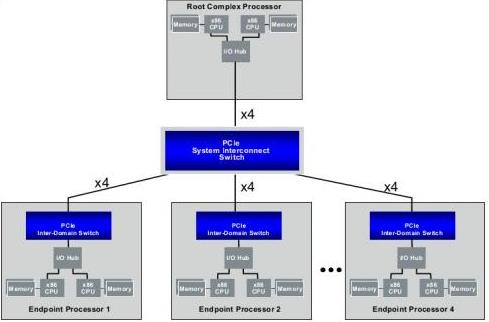

System Description

A multi-peer system topology is shown in Figure 1. A x4 PCIe interface connects each Root Processor and Endpoint Processor to the PES64H16 System Interconnect PCIe switch. This topology is used to measure the system data transfer performance.
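As a rough point of reference for the x4 links described above, the following sketch computes the usable bit rate of a PCIe link per direction. It assumes Gen1 or Gen2 signaling with 8b/10b encoding; the text does not state which generation the links run at, so both are shown. The function name `link_gbps` is hypothetical.

```python
# Usable PCIe link bandwidth per direction, before packet (TLP/DLLP) overhead.
# Assumption: Gen1 (2.5 GT/s) or Gen2 (5.0 GT/s) signaling with 8b/10b line
# encoding. The text does not say which generation the x4 links use.
GT_PER_S = {"gen1": 2.5, "gen2": 5.0}   # gigatransfers per second, per lane
ENCODING_EFFICIENCY = 8 / 10             # 8b/10b: 8 data bits per 10 line bits


def link_gbps(lanes: int, generation: str) -> float:
    """Return the post-encoding bit rate in Gbps for one direction."""
    return lanes * GT_PER_S[generation] * ENCODING_EFFICIENCY


for gen in ("gen1", "gen2"):
    print(f"x4 {gen}: {link_gbps(4, gen):.1f} Gbps per direction")
```

These figures are upper bounds on the link itself. Any measured end-to-end rate also includes packet framing and software overhead, so it will be lower.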

A PES16NT2 provides the non-transparent bridge (NTB) function that connects an x86-based Endpoint Processor to a downstream port of the PES64H16 PCIe switch. The System Interconnect software provides a virtual Ethernet over PCIe interface. The Linux operating system (OS) detects a network interface and “sees” an Ethernet interface. It sends Ethernet packets to the PCIe interface as if it were an Ethernet interface, so the PCIe interface is hidden from the Linux OS as far as data transfer is concerned. All current networking protocol stacks, such as the TCP/IP stack, and user applications that run on top of the TCP/IP stack work without modification.

Figure 1 System Interconnect Topology

This application note presents the system data transfer performance of the PCIe System Interconnect. The network performance benchmark software netperf is used to measure performance. The results are compared with those of a loopback test and of 10 Gigabit Ethernet (10 GE).
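To illustrate the kind of measurement involved, here is a minimal sketch of a loopback throughput test using plain TCP sockets. It is not netperf and does not reproduce the figures in this note. The function name `measure_loopback_gbps`, the message sizes, and the 4 MiB transfer size are assumptions chosen for illustration.

```python
import socket
import threading
import time


def measure_loopback_gbps(message_size: int, total_bytes: int) -> float:
    """Send about total_bytes over loopback TCP in message_size writes."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind(("127.0.0.1", 0))        # port 0: let the OS pick a free port
    server.listen(1)
    port = server.getsockname()[1]
    received = []

    def sink() -> None:
        # Accept one connection and count bytes until the sender closes it.
        conn, _ = server.accept()
        count = 0
        with conn:
            while True:
                chunk = conn.recv(65536)
                if not chunk:
                    break
                count += len(chunk)
        received.append(count)

    receiver = threading.Thread(target=sink)
    receiver.start()

    payload = b"\x00" * message_size
    sent = 0
    start = time.perf_counter()
    with socket.create_connection(("127.0.0.1", port)) as client:
        while sent < total_bytes:
            client.sendall(payload)
            sent += message_size
    receiver.join()                       # wait until the receiver has seen EOF
    elapsed = time.perf_counter() - start
    server.close()
    return received[0] * 8 / elapsed / 1e9


for size in (512, 1024, 2048, 4096, 8192, 16384):
    rate = measure_loopback_gbps(size, 4 * 1024 * 1024)
    print(f"{size:6d} bytes per send: {rate:.2f} Gbps")
```

Because the virtual Ethernet over PCIe interface appears to Linux as an ordinary network interface, the same socket code would in principle run across it by replacing the loopback address with the peer's address.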

For the AMD system, the effective data transfer rate is between 3 and 3.5 Gbps for data sizes between 1K and 16K bytes. The data rate is about 2.5 Gbps for a data size of 512 bytes.

For the Bensley system, the effective data transfer rate is about 5 Gbps for data sizes of 2K to 16K bytes, and about 4 Gbps and 3 Gbps for data sizes of 1K and 512 bytes respectively. These data transfer rates are similar to those of the 10 GE interface. Bensley performs much better than AMD because Bensley supports a DMA engine to transfer data. The DMA engine transfers data more efficiently and relieves the CPU of copying data, which frees CPU cycles for more data transfer processing.

For bulk data transfers, the data size is likely to be large, such as 4K to 8K bytes. In practice, the effective data transfer rate for PCIe System Interconnect is therefore expected to be about 5 Gbps for Bensley and 3.5 Gbps for AMD.

In general, protocol encapsulation overhead reduces the effective bandwidth. However, the reduction has been shown to be only about 1-2% for large data sizes, so the bandwidth gained by reducing protocol encapsulation overhead is negligible. Removing the TCP/IP protocol stack from the data transfer path does, however, significantly reduce the CPU cycles consumed and frees the CPU to do more data transfer processing.
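The size of the encapsulation overhead can be estimated with simple arithmetic. The sketch below assumes one Ethernet, IPv4, and TCP header (14 + 20 + 20 bytes, no options) per data unit, meaning the virtual interface carries each data unit in a single frame. Both the header sizes and the one-frame-per-unit behavior are assumptions, not figures from this note.

```python
# Estimated share of transferred bytes spent on protocol headers.
# Assumptions (not from the source): Ethernet 14 B + IPv4 20 B + TCP 20 B,
# no options, and exactly one set of headers per data unit.
HEADER_BYTES = 14 + 20 + 20


def overhead_fraction(payload_bytes: int) -> float:
    """Return header bytes as a fraction of total bytes on the wire."""
    return HEADER_BYTES / (payload_bytes + HEADER_BYTES)


for size in (512, 1024, 2048, 4096, 8192):
    print(f"{size:5d} B payload: {overhead_fraction(size):.1%} overhead")
```

Under these assumptions the overhead at 4K bytes is 54 / 4150, or about 1.3%, which is in line with the 1-2% reduction quoted for large data sizes.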
